Artificial intelligence and data banks have advanced steadily in recent months, gaining ground in the competition for people’s attention and trust. With that growth, a discussion that has existed since the early search engines and information centers has resurfaced: Can their algorithms eliminate bias?

Much of the discussion has centered on ChatGPT, which has been extremely successful and has exposed a wide audience to the new capabilities of AI. However, the program has been criticized from all sides, accused of being both offensive and overly politically correct. These accusations are connected to the biases associated with it: where it gets its information, how it learns, and how it formulates its answers, which stem both from the way it was programmed and from how internet search bases operate as a whole. The controversy was on display when Elon Musk announced on Fox News that he would build his own “TruthGPT” to counter the “threat” and “lies” of ChatGPT.

To address possible bias, it is first important to differentiate AI, a predictive system capable of evolving and simulating human intelligence, from data banks, which hold vast amounts of data that can be easily sourced and manipulated. The two terms are often used interchangeably, and the two technologies are often used in unison: ChatGPT itself draws on an enormous body of text data and uses AI to form its answers.

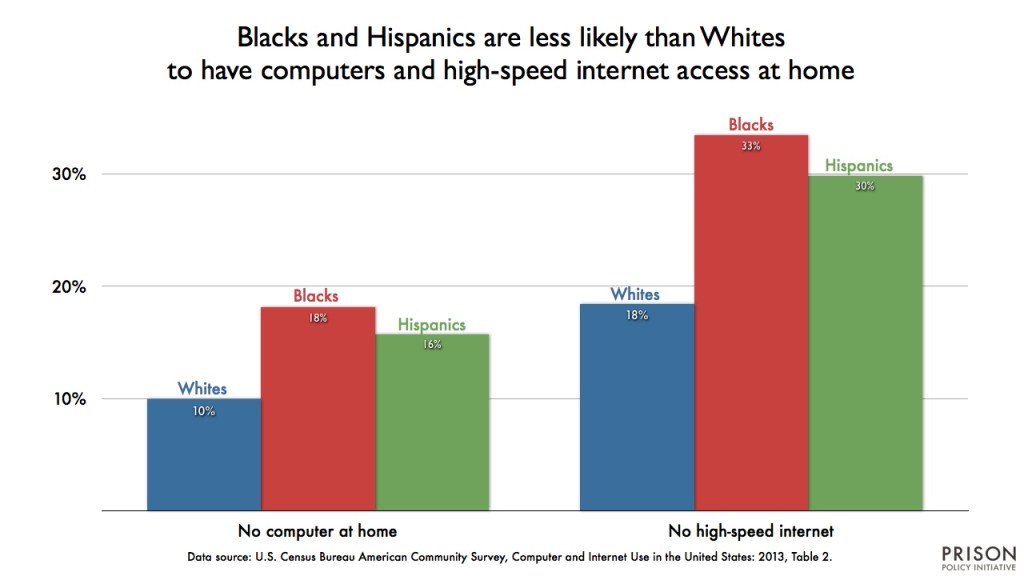

The accusations of bias rest first on how ChatGPT gets its information. Although the platform seems to formulate its own answers, they are largely a rapid synthesis of what has already been written about the question, drawn from an enormous body of text taken mostly from the internet, far faster and broader than a human could manage. This also means that it is inherently susceptible to the biases of the internet, including the question of who gets to publish information. Because much of the information on the internet has been published by white men, who have historically had more access to the internet and to academic recognition, other perspectives and cultures can be ignored. For example, when answering a question about an issue such as indigenous rights, the algorithm would be more likely to present information written by non-indigenous people than by indigenous people, simply because that material is more common and more widely engaged with on the internet.

Secondly, the way the system formulates and offers its responses leaves it open to accusations of bias. Despite having safeguards meant to avoid answering questions that may be charged with bias, it could still have ideals integrated into its algorithm. When asked controversial questions, such as ones with political context, it may answer only with a reminder such as: “I’m designed to provide information and assist with a wide range of topics, including politics, while remaining neutral and unbiased.” People on both the political left and right have accused the algorithm of being coded with a bias toward the other side, whether by framing answers in a way that aligns with more liberal ideals or by making politically incorrect remarks.

While bias can be hard to identify on a platform that claims to offer almost boundless knowledge, it is important to stay critical and keep these possibilities in mind. Even as AI advances, people must be able to maintain their critical thinking and check the credibility of information.